With the disruptive arrival of the Apple Silicon Macs, the industry saw a push away from TDP-be-damned space heater hardware to more sensible performance-per-watt targets. The Apple M1, M2 and their mobile counterparts all feature two main general-purpose core types: performance and efficiency. Intel’s recent offerings are positively stuffed full of efficiency cores, and other players are following suit.

That, however, poses a challenge for developers. It’s now harder than ever to light up all those transistors and produce efficient software:

> Race to sleep: The faster your software runs, the less power it consumes. […] We’re trying to actually engage the entire chip, the entire piece of hardware to do a computation, in order to do that computation as rapidly and effectively as possible.
>
> – Chandler Carruth, “Efficiency with Algorithms, Performance with Data Structures”

This is pretty good advice. However, simply saturating all cores (or threads) your program can access is no longer a good strategy, as modern silicon throws two wrenches in our works:

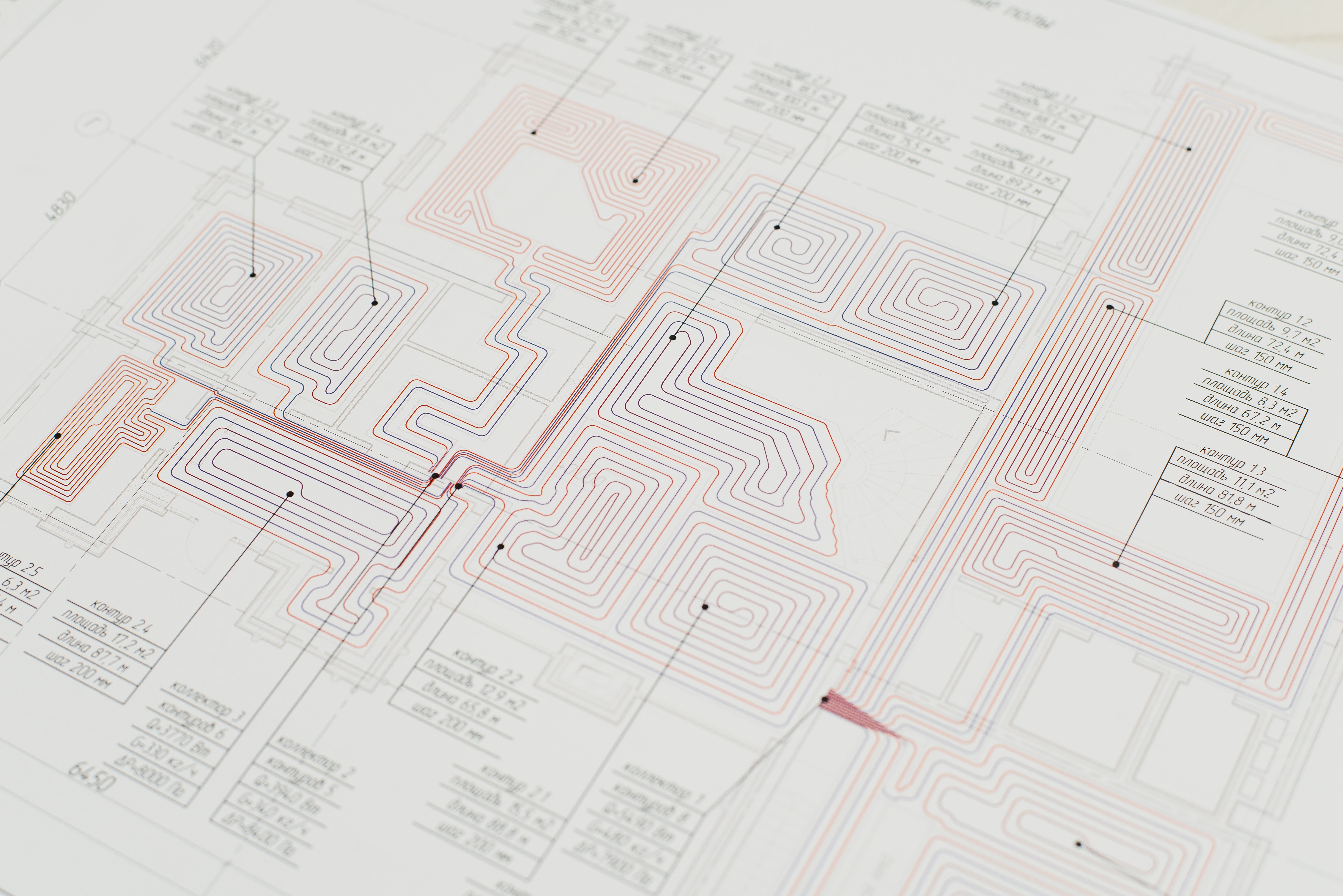

First, chip design tends to capture common algorithms in hardware, rendering entire classes of general-purpose-CPU implementations obsolete. Whereas you might have previously used optimized libraries for matrix multiplication, or even GPGPU, you’re now better off turning that work over to system frameworks and having them schedule it on specialized cores within the main chip. On Apple Silicon, there are no fewer than four different tuned hardware implementations for this purpose: CPU-bound ARM NEON, Apple AMX (a semi-secret matrix instruction set), Apple Neural Engine (dedicated few-big cores), and the GPU itself. ANE implementations for deep learning inference outperform implementations using Apple Accelerate (NEON/AMX) by almost 300%!

Matrix multiplication is still a pretty general use case, though, and more specific co-processors exist for media encoding and decoding, crypto, real-time image processing and more. But what if you do have a workload that isn’t a good fit for hardware acceleration? If your M1 Ultra reports 20 CPU cores, scheduling 20 threads should let you use “the entire piece of hardware”, right?

Sure enough, you can do that and you will see 100% utilization across all cores (let’s assume your workload is data-parallel and your algorithm is optimal, so no time is wasted on IO and cache misses). But taking our earlier example of the 20-core M1 Ultra, your program will run virtually as fast using 20 threads as it would using 16, the number of performance cores on that chip. Why is that?

The difference is caused by the second wrench: the efficiency cores. For typical software, which mixes short-lived, small tasks with longer-running, intense ones, using all threads isn’t a problem. The easy stuff (e.g. keeping the UI responsive) can be scheduled on the efficiency cores, while heavy processing goes to the performance cores. That’s why Logic, for example, allows you to go all in.

But if all your threads are coordinating the same intense workload, using both efficiency and performance cores will cause the clock speed of the performance cores to be dragged down. The result is an almost non-existent performance gain compared to using just the performance cores, plus a risk of system lag as the system UI and background services are choked by the inappropriate load on the efficiency cores.

Instead, your program should be aware of the number of cores available at the appropriate performance level. On macOS, this can be queried using sysctl:

```shell
$ sysctl hw.perflevel0.logicalcpu_max
hw.perflevel0.logicalcpu_max: 8
```

Here `perflevel0` is the “performance” level, and `perflevel1` would be the “efficiency” level.
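To use that number from a program rather than a terminal, one option is to shell out to `sysctl` and size your worker pool from the result. The following is a minimal sketch, not a definitive implementation: the function name `performance_core_count` and the constant `PERF_KEY` are made up for illustration, and the fallback to `os.cpu_count()` on machines without that key (non-macOS systems, Intel Macs) is an assumption about what you would want there.

```python
import os
import subprocess

# sysctl key from the text above: logical CPUs at performance level 0.
PERF_KEY = "hw.perflevel0.logicalcpu_max"


def performance_core_count(key: str = PERF_KEY) -> int:
    """Return the number of performance-level logical CPUs.

    Falls back to os.cpu_count() when sysctl is unavailable or the key
    does not exist (assumed behaviour for non-Apple-Silicon machines).
    """
    try:
        result = subprocess.run(
            ["sysctl", "-n", key],  # -n prints only the value, not the key
            capture_output=True,
            text=True,
            check=True,
        )
        return int(result.stdout.strip())
    except (OSError, subprocess.CalledProcessError, ValueError):
        # No sysctl binary, unknown key, or output that is not a number.
        return os.cpu_count() or 1
```

You could then pass the result as `max_workers` to a `concurrent.futures.ProcessPoolExecutor` instead of relying on the default, which is based on the total CPU count and therefore includes the efficiency cores.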